Introduction

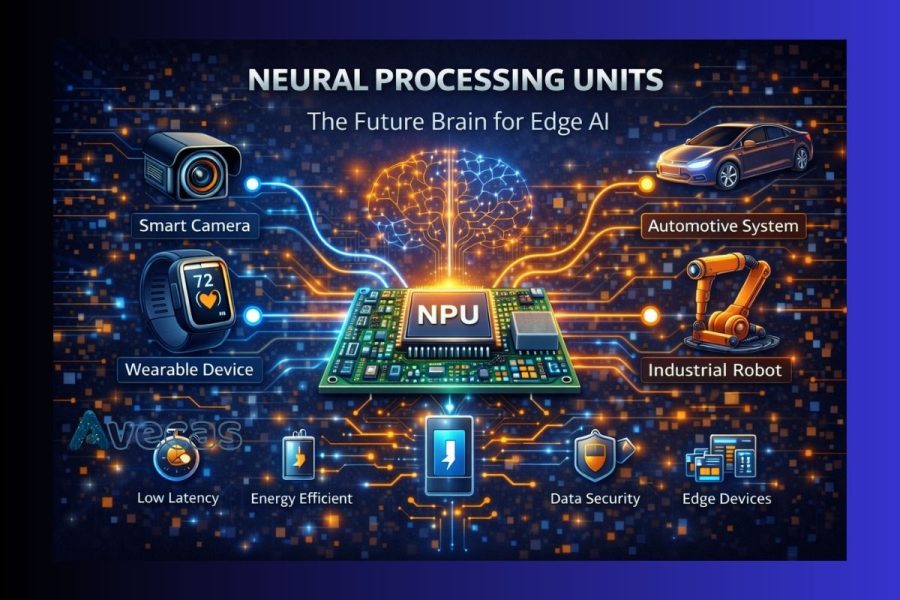

Artificial intelligence is rapidly moving to the edge, closer to where data is generated. From smart cameras and wearables to autonomous machines and industrial sensors, modern devices are expected to analyze data instantly without relying on constant cloud connectivity. This shift has created the need for specialized hardware, and that is where Neural Processing Units, commonly known as NPUs, come into play.

NPUs are purpose-built processors designed to handle AI and machine learning workloads efficiently. They are becoming the core computing engine for edge AI, enabling faster decisions, lower power consumption, and real-time intelligence directly on devices. In this article, we explore what NPUs are, why they matter, how they differ from traditional processors, and why they are shaping the future of edge computing.

What Is a Neural Processing Unit

A Neural Processing Unit is a dedicated hardware accelerator optimized for executing neural network operations. Unlike general-purpose CPUs or graphics-oriented GPUs, NPUs are designed around the operations that dominate neural networks, such as matrix multiplication, convolution, and vector operations, and execute them with high efficiency.

These operations form the backbone of AI workloads like image recognition, voice processing, natural language understanding, and predictive analytics. By focusing on AI-specific computations, NPUs can deliver higher performance on these workloads while using significantly less energy than general-purpose processors.
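To give a sense of scale, the sketch below counts the multiply-accumulate (MAC) operations in a single convolution layer; the MAC is the basic arithmetic step that NPU compute arrays are typically built around. It is a minimal Python illustration that assumes stride 1, no padding, and no channel grouping. The function names and the layer dimensions (56 x 56 output, 64 input and 64 output channels, 3 x 3 kernel) are hypothetical choices for the example, not figures from any specific NPU or model.

```python
def conv2d_macs(h_out: int, w_out: int, c_in: int, c_out: int, k: int) -> int:
    """MACs for a standard 2D convolution (stride 1, no padding, no groups)."""
    # Each of the h_out * w_out * c_out outputs sums over c_in * k * k products.
    return h_out * w_out * c_out * c_in * k * k


def conv2d_direct(x, w):
    """Naive reference convolution that also counts the MACs it performs.

    x: input, nested lists of shape [c_in][h][w]
    w: weights, nested lists of shape [c_out][c_in][k][k]
    Like most deep learning frameworks, this computes cross-correlation
    (the kernel is not flipped).
    """
    c_in, h, width = len(x), len(x[0]), len(x[0][0])
    c_out, k = len(w), len(w[0][0])
    h_out, w_out = h - k + 1, width - k + 1
    y = [[[0.0] * w_out for _ in range(h_out)] for _ in range(c_out)]
    macs = 0
    for co in range(c_out):
        for i in range(h_out):
            for j in range(w_out):
                acc = 0.0
                for ci in range(c_in):
                    for di in range(k):
                        for dj in range(k):
                            # One multiply-accumulate, the step an NPU array
                            # typically replicates many times in hardware.
                            acc += x[ci][i + di][j + dj] * w[co][ci][di][dj]
                            macs += 1
                y[co][i][j] = acc
    return y, macs


if __name__ == "__main__":
    layer_macs = conv2d_macs(h_out=56, w_out=56, c_in=64, c_out=64, k=3)
    print(f"{layer_macs:,} MACs per frame")  # 115,605,504 MACs per frame
    print(f"{layer_macs * 30:,} MACs per second at 30 frames per second")  # 3,468,165,120
```

The naive loop is only a correctness reference; its counter matches the closed-form formula. A vision network typically stacks many such layers, so even this one hypothetical layer needs billions of MACs per second for live video.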

Why Edge AI Needs NPUs

Edge AI refers to running artificial intelligence algorithms directly on local devices instead of sending data to centralized cloud servers. This approach offers several advantages, but it also introduces challenges that NPUs are well suited to address.

Low Latency Requirements

Applications like autonomous driving, industrial automation, and medical monitoring require split-second decisions. Because NPUs process data locally, they avoid the delays caused by sending data over a network and waiting for a response.
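A quick calculation shows why delay matters in a moving system: while a result is pending, a vehicle keeps travelling at its current speed. The sketch below is a minimal Python illustration of distance = speed × delay. The 100 km/h speed and the 100 ms and 20 ms delays are illustrative inputs, not measurements of any network, server, or NPU.

```python
def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Metres travelled at constant speed while waiting delay_ms for a result."""
    speed_m_per_s = speed_kmh / 3.6  # 1 m/s = 3.6 km/h
    return speed_m_per_s * (delay_ms / 1000.0)


if __name__ == "__main__":
    # Illustrative delays only: a hypothetical remote round trip versus a
    # hypothetical on-device inference.
    for label, delay_ms in [("remote round trip", 100.0), ("on-device", 20.0)]:
        d = distance_during_delay_m(100.0, delay_ms)
        print(f"{label}: {d:.2f} m travelled at 100 km/h")
```

With these made-up numbers, every 100 ms of delay corresponds to roughly 2.8 m of travel before the system can react.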

Power Efficiency

Edge devices often operate on batteries or limited power budgets. NPUs are optimized to deliver high AI performance while consuming far less power than CPUs or GPUs running the same workload.
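Power draw alone can mislead, because battery drain depends on energy, that is, power multiplied by the time spent working. The minimal Python sketch below compares energy per inference for three hypothetical processors. Their wattages and latencies are made-up inputs chosen only to show the arithmetic, not specifications of real chips.

```python
def energy_per_inference_mj(power_w: float, latency_ms: float) -> float:
    """Energy per inference in millijoules (watts x milliseconds = millijoules)."""
    return power_w * latency_ms


def inferences_per_joule(power_w: float, latency_ms: float) -> float:
    """Inverse of energy per inference, a common efficiency figure."""
    return 1000.0 / energy_per_inference_mj(power_w, latency_ms)


if __name__ == "__main__":
    # Hypothetical figures for illustration only: (watts, ms per inference).
    processors = {
        "hypothetical general-purpose core": (5.0, 20.0),
        "hypothetical low-power microcontroller": (0.1, 2000.0),
        "hypothetical NPU": (1.0, 10.0),
    }
    for name, (power_w, latency_ms) in processors.items():
        e = energy_per_inference_mj(power_w, latency_ms)
        rate = inferences_per_joule(power_w, latency_ms)
        print(f"{name}: {e:.0f} mJ per inference, {rate:.0f} inferences per joule")
```

In this made-up comparison the microcontroller draws the least power yet spends the most energy per inference (200 mJ versus 10 mJ for the hypothetical NPU), because it runs for so much longer.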

Data Privacy and Security

Processing data locally reduces the need to transmit sensitive information over networks, improving privacy and compliance with data protection regulations.

Scalability at the Edge

As billions of connected devices adopt AI capabilities, NPUs make it feasible to scale intelligence across the edge without overwhelming cloud infrastructure.

How NPUs Differ from CPUs and GPUs

While CPUs, GPUs, and NPUs all perform computation, their design goals are very different.

CPU

CPUs are designed for versatility. They handle operating systems, control logic, and general computing tasks, but they are not optimized for heavy AI workloads.

GPU

GPUs excel at parallel processing and are widely used for AI training. However, they typically consume more power, which makes them less suitable for compact, power-constrained edge devices.

NPU

NPUs are purpose-built for AI inference. They execute neural network models efficiently, using specialized data paths and memory architectures that reduce energy consumption and increase speed.

This specialization makes NPUs ideal for always-on AI tasks at the edge.

Key Features of Neural Processing Units

Modern NPUs include several features that make them well suited to edge AI applications:

Parallel AI Execution

NPUs process multiple neural network operations simultaneously, delivering fast inference even for complex models.
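Peak NPU throughput is commonly quoted in TOPS (tera operations per second), conventionally counting each multiply-accumulate as two operations. The minimal Python sketch below derives peak TOPS from the number of parallel MAC units and the clock rate, then estimates an ideal, fully utilized lower bound on the time to run one convolution layer. The 4,096-unit array, the 1 GHz clock, and the layer shape are hypothetical; real utilization is lower because of memory stalls and layers that do not map perfectly onto the array.

```python
def peak_tops(mac_units: int, clock_hz: float) -> float:
    """Peak tera-operations per second, counting one MAC as 2 operations."""
    return 2 * mac_units * clock_hz / 1e12


def ideal_cycles(total_macs: int, mac_units: int) -> int:
    """Lower bound on cycles if every MAC unit does useful work every cycle."""
    # Integer ceiling division: a partially filled last cycle still costs a cycle.
    return (total_macs + mac_units - 1) // mac_units


if __name__ == "__main__":
    mac_units, clock_hz = 4096, 1.0e9       # hypothetical NPU MAC array
    layer_macs = 56 * 56 * 64 * 64 * 3 * 3  # 3x3 conv, 64->64 channels, 56x56 output
    cycles = ideal_cycles(layer_macs, mac_units)
    print(f"peak: {peak_tops(mac_units, clock_hz):.3f} TOPS")  # 8.192 TOPS
    print(f"ideal: {cycles:,} cycles = {cycles / clock_hz * 1e6:.3f} us per layer")
```

Running all 4,096 units in parallel turns about 115.6 million MACs into 28,224 cycles in this idealized model, which is the essence of parallel AI execution.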

Optimized Memory Access

Keeping data close to the compute units and minimizing transfers to and from external memory reduces latency and power consumption, which is critical for real-time applications.
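One common technique behind this kind of memory optimization is tiling: a block of each operand is loaded into a small on-chip buffer and reused many times before the next block is fetched. The minimal Python sketch below multiplies two square matrices tile by tile and counts how many elements it reads from "external" memory, compared with a naive loop that re-reads both operands for every MAC. It is a simplified model that assumes the matrix size is a multiple of the tile size and ignores output writes; the buffer is simulated and does not represent any particular NPU's memory hierarchy.

```python
def matmul_naive(a, b):
    """C = A @ B, fetching both operands from external memory for every MAC."""
    n = len(a)
    c = [[0.0] * n for _ in range(n)]
    loads = 0
    for i in range(n):
        for j in range(n):
            for k in range(n):
                c[i][j] += a[i][k] * b[k][j]
                loads += 2  # one element of A and one of B
    return c, loads


def matmul_tiled(a, b, t):
    """C = A @ B computed in t x t tiles held in a simulated on-chip buffer."""
    n = len(a)
    assert n % t == 0, "sketch assumes n is a multiple of the tile size"
    c = [[0.0] * n for _ in range(n)]
    loads = 0
    for i0 in range(0, n, t):
        for j0 in range(0, n, t):
            for k0 in range(0, n, t):
                # Copy one tile of A and one tile of B into the local buffer.
                a_tile = [row[k0:k0 + t] for row in a[i0:i0 + t]]
                b_tile = [row[j0:j0 + t] for row in b[k0:k0 + t]]
                loads += 2 * t * t
                # Each buffered element is now reused t times with no new loads.
                for i in range(t):
                    for j in range(t):
                        acc = 0.0
                        for k in range(t):
                            acc += a_tile[i][k] * b_tile[k][j]
                        c[i0 + i][j0 + j] += acc
    return c, loads


if __name__ == "__main__":
    n, t = 8, 4
    a = [[float(i + j) for j in range(n)] for i in range(n)]
    b = [[float(i - j) for j in range(n)] for i in range(n)]
    _, naive_loads = matmul_naive(a, b)
    _, tiled_loads = matmul_tiled(a, b, t)
    print(naive_loads, tiled_loads)  # 1024 256
```

In this model the naive loop reads 2n³ elements while the tiled version reads 2n³/t, so a larger on-chip buffer (a bigger tile) cuts external traffic proportionally.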

Support for AI Frameworks

NPUs are designed to work with popular AI models and toolchains, making deployment easier for developers.
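As one concrete example of toolchain support, ONNX Runtime runs a model through an ordered list of execution providers, with later entries (typically the CPU provider) handling work an earlier provider cannot. The sketch below is a minimal, hypothetical helper that builds that list from whatever providers the installed build reports. The provider names are real ONNX Runtime identifiers, but which ones are available depends on the platform and package, and the preference order and the `model.onnx` path are assumptions for illustration.

```python
# Real ONNX Runtime provider identifiers; availability depends on the installed
# onnxruntime package and the device. The preference order is an assumption.
PREFERRED_NPU_PROVIDERS = [
    "QNNExecutionProvider",       # Qualcomm AI Engine Direct
    "NnapiExecutionProvider",     # Android Neural Networks API
    "CoreMLExecutionProvider",    # Apple Core ML
    "OpenVINOExecutionProvider",  # Intel OpenVINO
]


def choose_providers(available, preferred=PREFERRED_NPU_PROVIDERS):
    """Return the available preferred accelerators, with CPU as the last fallback."""
    chosen = [p for p in preferred if p in available]
    if "CPUExecutionProvider" in available:
        chosen.append("CPUExecutionProvider")
    return chosen


if __name__ == "__main__":
    # With onnxruntime installed, this would typically be wired up as:
    #   import onnxruntime as ort
    #   session = ort.InferenceSession(
    #       "model.onnx", providers=choose_providers(ort.get_available_providers()))
    print(choose_providers(["CoreMLExecutionProvider", "CPUExecutionProvider"]))
```

Keeping the CPU provider at the end of the list lets the same application code run on devices with or without a supported NPU.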

Low Power Operation

Advanced power management allows NPUs to run continuously without draining batteries quickly.
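Always-on features are often duty-cycled: the accelerator wakes briefly to run a model and spends the rest of the time in a low-power idle state, so average power, rather than peak power, sets battery life. The minimal Python sketch below computes that average and the resulting runtime. The battery capacity, active and idle power, and 2% duty cycle are hypothetical inputs, not figures for any real device.

```python
def average_power_w(active_w: float, idle_w: float, duty_cycle: float) -> float:
    """Time-weighted average power when a fraction duty_cycle is spent active."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_w + (1.0 - duty_cycle) * idle_w


def battery_hours(capacity_wh: float, avg_w: float) -> float:
    """Runtime in hours for a battery of capacity_wh at a constant average draw."""
    return capacity_wh / avg_w


if __name__ == "__main__":
    # Hypothetical wearable: 1.0 Wh battery, 0.5 W while inferring,
    # 5 mW when idle, active 2% of the time.
    avg = average_power_w(active_w=0.5, idle_w=0.005, duty_cycle=0.02)
    print(f"average power: {avg * 1000:.1f} mW")    # 14.9 mW
    print(f"runtime: {battery_hours(1.0, avg):.1f} h")  # 67.1 h
```

In this made-up case the idle draw accounts for about a third of the average power (4.9 of 14.9 mW), which is why low idle power matters as much as efficient inference.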

Applications of NPUs in Edge AI

Neural Processing Units are already transforming multiple industries.

Smart Cameras and Surveillance

NPUs enable real-time object detection, facial recognition, and behavior analysis directly within cameras.

Wearable Devices

Fitness trackers and health monitors use NPUs to analyze biometric data efficiently while maintaining long battery life.

Automotive Systems

Advanced driver assistance systems rely on NPUs for real-time vision processing and sensor fusion.

Industrial Automation

Edge AI powered by NPUs improves quality inspection, predictive maintenance, and robotic control.

Smart Home Devices

Voice assistants and intelligent appliances use NPUs to process commands locally, improving responsiveness and privacy.

Role of NPUs in Semiconductor Design

As demand for edge AI grows, semiconductor companies are increasingly integrating NPUs into system-on-chip (SoC) designs. These integrated solutions combine CPUs, NPUs, memory, and connectivity into compact, power-efficient platforms suitable for edge devices.

This trend is driving innovation in chip architecture, manufacturing processes, and backend optimization to support AI workloads at scale.

Challenges in NPU Adoption

Despite their advantages, NPUs also present challenges:

- Software optimization for different NPU architectures

- Balancing performance with silicon area and cost

- Ensuring compatibility with evolving AI models

- Managing thermal constraints in compact devices

Addressing these challenges requires close collaboration between hardware designers, software developers, and system architects.
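The software and compatibility challenges often surface as operator coverage: when a model uses an operation the NPU toolchain does not support, that part of the model falls back to another processor, and every switch between processors adds data transfers. The minimal Python sketch below splits a model, simplified here to a linear sequence of operations, into contiguous NPU and CPU segments. The operation names and the supported set are hypothetical; real toolchains partition full computation graphs using their own rules.

```python
from itertools import groupby


def partition(ops, npu_supported):
    """Group consecutive ops into (device, [ops]) segments."""
    segments = []
    # groupby starts a new group whenever the key (supported or not) changes.
    for on_npu, group in groupby(ops, key=lambda op: op in npu_supported):
        segments.append(("NPU" if on_npu else "CPU", list(group)))
    return segments


if __name__ == "__main__":
    # Hypothetical model and NPU operator set, for illustration only.
    model_ops = ["conv2d", "relu", "conv2d", "gelu", "matmul", "softmax"]
    npu_supported = {"conv2d", "relu", "matmul", "softmax"}
    segments = partition(model_ops, npu_supported)
    for device, ops in segments:
        print(device, ops)
    print("device switches:", len(segments) - 1)  # device switches: 2
```

Here a single unsupported operation splits the model into three segments; adding support for it in the NPU toolchain would remove both device switches.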

The Future of NPUs in Edge AI

NPUs are set to become a standard component in edge devices. As AI models become more efficient and hardware continues to evolve, NPUs will enable smarter, more autonomous systems that operate reliably without constant cloud support.

Future developments will focus on improved performance per watt, better developer tools, tighter integration with other processors, and broader adoption across consumer, industrial, and automotive markets.

Conclusion

Neural Processing Units are redefining how artificial intelligence is deployed at the edge. By delivering high-performance AI inference with low power consumption and minimal latency, NPUs serve as the true brain behind modern edge AI systems.

As edge intelligence becomes a necessity rather than a luxury, NPUs will play a central role in shaping the next generation of smart, responsive, and energy-efficient devices across industries.